The Web Audio API is an interface for generating and managing audio directly in the browser using JavaScript. In June 2021, the World Wide Web Consortium (W3C) announced that the Web Audio API had become an official standard (a W3C Recommendation).

We used this interface when working on the Virtual Y project, which is based on Drupal. It was a video-call application for personal training. We needed to develop a sound indication widget that could detect the sound state of both the caller and the other participant (for example, whether the microphone was muted) and visualize changes in the volume level. We enjoyed using the Web Audio API, and in this article, we explain how the API works and describe some of the features it provides.

What Came Before the Web Audio API?

Historically, building an audio application for the browser was a challenging task, and developers had only a few options for working with sound. One of them was the non-standard, now deprecated Background Sound element <bgsound>, which was meant to play a sound file in the background while a user interacted with the page.

Another tool for working with audio was Adobe Flash, a platform for developing and displaying multimedia and interactive content. Adobe stopped supporting Flash Player at the end of 2020.

In 2014, HTML5 became a W3C Recommendation and introduced, among other features, the <audio> element for embedding sound content. It is supported by most browsers and provides basic features, such as play, pause, fast forward, and volume adjustment. It was easier to use than its predecessors, but still limited. The Web Audio API takes audio handling even further: while earlier tools mainly aimed to play recorded audio, this interface focuses on creating and modifying sound and supports a wide range of audio operations.

Basic Concept Behind Web Audio API

The Web Audio API is a way of generating or processing audio in the web browser using JavaScript.

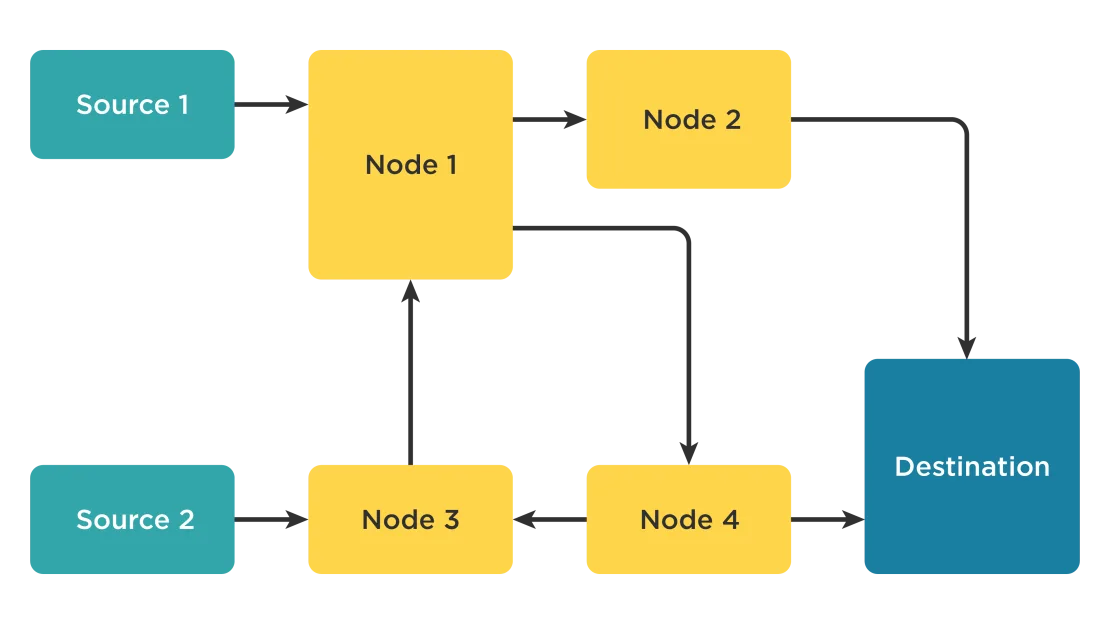

The Web Audio API handles audio operations inside an audio context and is designed for modular routing. Basic audio operations are performed by audio nodes, which are linked together through their inputs and outputs. The nodes form a chain (an audio routing graph) that starts with one or more sources, passes through one or more processing nodes, and ends at a destination.
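A minimal sketch of such a chain, assuming a browser environment: one OscillatorNode as the source, one GainNode as the processing node, and the context's default destination (usually the speakers). The function name `playTone` and its default values are illustrative, not part of the API.

```javascript
// Build the smallest useful graph: source -> processing node -> destination.
// `ctx` is an AudioContext created by the caller.
function playTone(ctx, frequency = 440, volume = 0.2, seconds = 1) {
  const oscillator = ctx.createOscillator(); // source node
  oscillator.frequency.value = frequency;    // Hz

  const gain = ctx.createGain();             // processing node
  gain.gain.value = volume;                  // linear gain, 1 = unchanged

  // Route the nodes: oscillator -> gain -> speakers.
  oscillator.connect(gain);
  gain.connect(ctx.destination);

  // Schedule playback on the context's own clock (in seconds).
  oscillator.start(ctx.currentTime);
  oscillator.stop(ctx.currentTime + seconds);
  return { oscillator, gain };
}
```

In a page, this would typically be called from a user gesture, for example `button.onclick = () => playTone(new AudioContext());`, because browser autoplay policies may keep a context created without user interaction in the "suspended" state.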

What tasks can be performed with the Web Audio API?

Here are a couple of examples:

- Generate audio using mathematical algorithms.

- Apply effects to audio that is being played.

- Create visualizations of the generated or played stream.
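As an illustration of the first task, the sketch below computes a sine wave with a plain mathematical formula and plays it through an AudioBufferSourceNode. The helper names `sineSamples` and `playSamples` are hypothetical; `createBuffer`, `copyToChannel`, and `createBufferSource` are standard Web Audio calls.

```javascript
// Generate one channel of a sine wave:
// sample[i] = amplitude * sin(2 * pi * frequency * i / sampleRate)
function sineSamples(frequency, sampleRate, seconds, amplitude = 0.5) {
  const length = Math.round(sampleRate * seconds);
  const samples = new Float32Array(length);
  for (let i = 0; i < length; i++) {
    samples[i] = amplitude * Math.sin((2 * Math.PI * frequency * i) / sampleRate);
  }
  return samples;
}

// Copy the samples into a mono AudioBuffer and play them once.
function playSamples(ctx, samples) {
  const buffer = ctx.createBuffer(1, samples.length, ctx.sampleRate);
  buffer.copyToChannel(samples, 0); // channel 0 of the buffer

  const source = ctx.createBufferSource();
  source.buffer = buffer;
  source.connect(ctx.destination);
  source.start();
  return source;
}
```

The samples should be generated at the context's sample rate, for example `const ctx = new AudioContext(); playSamples(ctx, sineSamples(440, ctx.sampleRate, 1));` plays one second of a 440 Hz tone.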

How can it be done? Let's go through the usual development stages.

Typical Workflow

The common process of working with the Web Audio API may look as follows:

1. Create an audio context.

2. Inside the context, create sources that provide audio samples. An audio file, a stream, or an oscillator can act as a source.

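- AudioBufferSourceNode plays audio data held in memory in an AudioBuffer, for example, sound decoded from an audio file.

- MediaElementAudioSourceNode uses the audio that an <audio> or <video> element contains.

- MediaStreamAudioSourceNode takes audio from a MediaStream, such as a microphone stream or a WebRTC stream.

- OscillatorNode generates a periodic waveform; you can set its type (for example, sine or square) and its frequency.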

3. Create effect nodes, such as reverb (ConvolverNode), a biquad filter, a panner, or a compressor, to modify the audio samples.

4. Choose the final destination of the audio. It can be system speakers, headphones, or other output devices.

- AudioDestinationNode represents the end destination of the audio in a given context, which is usually the speakers.

- MediaStreamAudioDestinationNode represents an audio destination consisting of a WebRTC MediaStream with a single audio MediaStreamTrack, which can be used in the same way as a MediaStream obtained from navigator.mediaDevices.getUserMedia().

5. Establish connections: connect the sources to the effects, and the effects to the destination.
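The five steps above can be sketched as a single function. This is a minimal illustration, not code from the Virtual Y project: it assumes the caller already has an AudioContext (step 1), an `<audio>` element, and a microphone MediaStream, and the name `buildWorkflow` and all parameter values are hypothetical.

```javascript
// Steps 2-5 of the workflow for an existing AudioContext `ctx` (step 1).
// `audioElement` is an <audio>/<video> element; `micStream` is a MediaStream.
function buildWorkflow(ctx, audioElement, micStream) {
  // Step 2: sources.
  const oscillator = ctx.createOscillator();                        // OscillatorNode
  oscillator.type = "square";
  const elementSource = ctx.createMediaElementSource(audioElement); // MediaElementAudioSourceNode
  const micSource = ctx.createMediaStreamSource(micStream);         // MediaStreamAudioSourceNode

  // Step 3: effect nodes.
  const filter = ctx.createBiquadFilter();
  filter.type = "lowpass";
  filter.frequency.value = 1000; // cutoff in Hz
  const panner = ctx.createStereoPanner();
  panner.pan.value = -0.5;       // slightly to the left
  const compressor = ctx.createDynamicsCompressor();

  // Step 4: destinations: the speakers and an outgoing MediaStream.
  const speakers = ctx.destination;
  const streamOut = ctx.createMediaStreamDestination();

  // Step 5: connections. connect() returns its target node, so calls can be chained.
  oscillator.connect(filter).connect(compressor);
  elementSource.connect(panner).connect(compressor);
  micSource.connect(streamOut); // mic only goes out, not to the speakers (avoids feedback)
  compressor.connect(speakers);
  compressor.connect(streamOut);

  oscillator.start();
  return { outgoingStream: streamOut.stream };
}
```

The returned `outgoingStream` could, for example, be sent over WebRTC or recorded with MediaRecorder. Note that once an element is routed through `createMediaElementSource`, its audio is heard only via the graph, and an element can be attached to only one such source node.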

Typical Web Audio API Workflow

In this case, the real code might look like this:

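```javascript
// Node types are assumptions for illustration; the connections match the diagram.
const ctx = new AudioContext();
const source1 = new OscillatorNode(ctx, { frequency: 440 });
const source2 = new OscillatorNode(ctx, { frequency: 220 });
const node1 = new GainNode(ctx, { gain: 0.5 });
const node2 = new BiquadFilterNode(ctx, { type: "lowpass" });
// node1 -> node4 -> node3 -> node1 is a cycle. The API mutes cycles that do not
// contain a DelayNode, so node3 is a delay here.
const node3 = new DelayNode(ctx, { delayTime: 0.3 });
const node4 = new GainNode(ctx, { gain: 0.4 }); // loop gain 0.5 * 0.4 < 1, so the echo fades out
const destination = ctx.destination;

source1.connect(node1);
source2.connect(node3);
node1.connect(node4);
node1.connect(node2);
node2.connect(destination);
node3.connect(node1);
node4.connect(destination);
node4.connect(node3);

source1.start();
source2.start();
```

Additional Features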

Web Audio API provides various practical features. Here are some of them.

Data analysis and visualization

The AnalyserNode interface comes in handy when you need real-time frequency and time-domain information. The extracted data can be used to render a visualization of the audio stream.
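A minimal sketch of reading one snapshot from an AnalyserNode. The helper name `readAnalyser` and the two derived values (an average frequency magnitude and a time-domain peak) are illustrative choices, not something the API computes for you; `getByteFrequencyData` and `getByteTimeDomainData` are the standard AnalyserNode methods.

```javascript
// Read one snapshot of frequency and time-domain data from an AnalyserNode.
function readAnalyser(analyser) {
  // frequencyBinCount is half of fftSize; each byte is a magnitude from 0 to 255.
  const frequencyData = new Uint8Array(analyser.frequencyBinCount);
  analyser.getByteFrequencyData(frequencyData);

  // Time-domain data has fftSize samples; 128 corresponds to zero amplitude.
  const timeData = new Uint8Array(analyser.fftSize);
  analyser.getByteTimeDomainData(timeData);

  // Average magnitude over all frequency bins: a rough loudness measure.
  let sum = 0;
  for (const v of frequencyData) sum += v;
  const averageLevel = frequencyData.length ? sum / frequencyData.length : 0;

  // Largest deviation from the 128 midpoint, scaled to 0..1.
  let peak = 0;
  for (const v of timeData) peak = Math.max(peak, Math.abs(v - 128) / 128);

  return { frequencyData, timeData, averageLevel, peak };
}
```

Typical setup: `const analyser = ctx.createAnalyser(); analyser.fftSize = 256; source.connect(analyser);`, then call `readAnalyser(analyser)` once per animation frame and draw the arrays, for example, on a canvas.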

Managing audio channels

ChannelSplitterNode separates the channels of an audio source into a set of mono outputs. ChannelMergerNode combines several mono inputs into a single multichannel output; each input fills one channel of the output.
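For example, swapping the left and right channels of a stereo source takes one splitter and one merger. A minimal sketch, where the function name `swapStereoChannels` is hypothetical; it relies on the standard `connect(destination, outputIndex, inputIndex)` form to route a single splitter output to a single merger input.

```javascript
// Swap the left and right channels of a stereo source.
function swapStereoChannels(ctx, source) {
  const splitter = ctx.createChannelSplitter(2); // outputs: 0 = left, 1 = right
  const merger = ctx.createChannelMerger(2);     // inputs:  0 = left, 1 = right

  source.connect(splitter);
  splitter.connect(merger, 0, 1); // left output  -> right input
  splitter.connect(merger, 1, 0); // right output -> left input
  merger.connect(ctx.destination);
  return merger;
}
```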

Audio spatialization

AudioListener represents the position and orientation of the unique person listening to the audio scene and is used in audio spatialization. PannerNode describes the behavior of a signal in space: it is an AudioNode audio-processing module whose position is given by right-handed Cartesian coordinates and whose directionality is described by a sound cone.
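A minimal sketch of placing a source in 3D space with a PannerNode, leaving the AudioListener at its default pose (at the origin, facing the negative z direction, so positive x is to the listener's right). The function name `placeInSpace` and the chosen panning and distance models are illustrative assumptions.

```javascript
// Position `source` at (x, y, z) relative to the default listener.
function placeInSpace(ctx, source, x, y, z) {
  const panner = ctx.createPanner();
  panner.panningModel = "HRTF";     // head-related transfer function, suited to headphones
  panner.distanceModel = "inverse"; // volume falls off with distance
  panner.positionX.value = x;
  panner.positionY.value = y;
  panner.positionZ.value = z;

  source.connect(panner);
  panner.connect(ctx.destination);
  return panner;
}
```

Because the position components are AudioParams, they can be automated: `placeInSpace(ctx, osc, 2, 0, -1)` puts the sound in front of and to the right of the listener, and `panner.positionX.setValueAtTime(2, ctx.currentTime)` followed by `panner.positionX.linearRampToValueAtTime(-2, ctx.currentTime + 3)` would move it from right to left over three seconds.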

Audio processing

AudioWorkletNode represents an AudioNode that is embedded into an audio graph and can pass messages to the corresponding AudioWorkletProcessor. AudioWorkletProcessor represents audio processing code running in an AudioWorkletGlobalScope that generates, processes, or analyses audio directly and can pass messages to the corresponding AudioWorkletNode.
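A minimal sketch of a processor module, assuming a hypothetical file `gain-processor.js` and processor name `"gain-processor"`. It multiplies every input sample by a gain that the main thread can change through the MessagePort shared by the node and the processor. The fallback base class and the `registerProcessor` check exist only so the file can also be loaded outside an AudioWorkletGlobalScope; inside the worklet, the browser provides both.

```javascript
// gain-processor.js: runs in the AudioWorkletGlobalScope.
// Fallback base class only for loading this file outside a worklet (e.g. in tests).
const Base = globalThis.AudioWorkletProcessor ?? class {};

class GainProcessor extends Base {
  constructor() {
    super();
    this.gain = 1;
    // Messages from node.port.postMessage({ gain }) on the main thread.
    if (this.port) this.port.onmessage = (event) => { this.gain = event.data.gain; };
  }

  // Called for each render quantum with arrays of channel data (Float32Array).
  process(inputs, outputs) {
    const input = inputs[0];
    const output = outputs[0];
    for (let ch = 0; ch < output.length; ch++) {
      const inChannel = input[ch];
      const outChannel = output[ch];
      if (!inChannel) { outChannel.fill(0); continue; } // no input connected
      for (let i = 0; i < outChannel.length; i++) outChannel[i] = inChannel[i] * this.gain;
    }
    return true; // keep the processor alive
  }
}

if (typeof registerProcessor === "function") registerProcessor("gain-processor", GainProcessor);
```

On the main thread, the module would be loaded with `await ctx.audioWorklet.addModule("gain-processor.js")` and instantiated with `const node = new AudioWorkletNode(ctx, "gain-processor")`; `node.port.postMessage({ gain: 0.5 })` then halves the volume. For plain gain control, a built-in GainNode or an AudioParam declared through `parameterDescriptors` would be the usual choice; the message port is used here to show the node-processor communication.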

Offline/background audio processing

OfflineAudioContext represents an audio-processing graph built from linked AudioNodes. Unlike a regular AudioContext, an OfflineAudioContext doesn't render the audio to the device hardware; instead, it generates the audio as fast as it can and outputs the result to an AudioBuffer. The complete event fires when the rendering of an OfflineAudioContext has finished.
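A minimal sketch of offline rendering: a 440 Hz tone is rendered into an AudioBuffer without being played. The function name `renderToneOffline` and its default values are hypothetical; the constructor arguments are the number of channels, the length in sample frames, and the sample rate.

```javascript
// Render `seconds` of a 440 Hz tone as fast as possible, without playback.
function renderToneOffline(seconds = 2, sampleRate = 44100) {
  const ctx = new OfflineAudioContext(1, Math.round(seconds * sampleRate), sampleRate);

  const oscillator = ctx.createOscillator();
  oscillator.frequency.value = 440;
  oscillator.connect(ctx.destination);
  oscillator.start();

  // The complete event carries the rendered buffer...
  ctx.oncomplete = (event) => console.log("rendered", event.renderedBuffer.length, "frames");
  // ...and startRendering() also returns a Promise that resolves with it.
  return ctx.startRendering();
}
```

The resolved AudioBuffer can then be analyzed, encoded, or played later through an AudioBufferSourceNode in a regular AudioContext.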

Conclusion

When we worked on the "one on one" calls feature for the Virtual Y, we faced the following problem. The participants' video and audio state indicators had to show whether the microphones on both sides were working and transmitting the audio stream, so that weak spots in the sound transmission could be identified. First, we implemented a simple "disabled" indicator that showed whether audio or video had been manually enabled or disabled on the caller's side. However, this was not enough to tell whether a problem lay with the caller's microphone or, for example, with your headphones.

The next step was to use the Web Audio API to analyze the stream on the fly, measure the average volume in each frequency range, and change the size of the audio indicator based on this data. After that, it was easy to tell whether the microphone on your side worked properly.

Have a look at this example. The green audio indicator increases when the clap sound is loud and becomes smaller when it's quiet.
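The volume indicator idea described above can be sketched as follows. This is not the Virtual Y code: it is a minimal sketch that assumes a MediaStream (local or remote) and a DOM element to scale, and the function name `startVolumeIndicator` and the scale range are hypothetical.

```javascript
// Scale `indicator` (a DOM element) with the average frequency magnitude of `stream`.
function startVolumeIndicator(ctx, stream, indicator, minScale = 0.5, maxScale = 1.5) {
  const source = ctx.createMediaStreamSource(stream);
  const analyser = ctx.createAnalyser();
  analyser.fftSize = 256;               // 128 frequency bins
  analyser.smoothingTimeConstant = 0.8; // smooth out rapid jumps
  source.connect(analyser);             // analysis only; not routed to the speakers

  const bins = new Uint8Array(analyser.frequencyBinCount);
  let frameId = 0;

  function update() {
    analyser.getByteFrequencyData(bins);
    let sum = 0;
    for (const v of bins) sum += v;
    const level = sum / bins.length / 255; // 0 (silent) .. 1 (loudest)
    const scale = minScale + (maxScale - minScale) * level;
    indicator.style.transform = `scale(${scale})`;
    frameId = requestAnimationFrame(update);
  }
  update();

  // The returned function stops the updates and detaches the source node.
  return () => {
    cancelAnimationFrame(frameId);
    source.disconnect();
  };
}
```

For the local microphone, usage could look like `const stream = await navigator.mediaDevices.getUserMedia({ audio: true }); const stop = startVolumeIndicator(new AudioContext(), stream, document.querySelector("#mic-indicator"));`, where the `#mic-indicator` selector is hypothetical.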

The Web Audio API is a groundbreaking solution for creating, processing, and modifying audio content in web applications, and it is now also an official W3C standard. In this post, we showed how to work with this API and reviewed its key features.